Proving the value of a competitive intelligence program used to be uncomfortable. You'd point at a win, claim it was influenced by a battlecard, and hope no one asked too many questions. That era is over. In 2026, CI leaders are expected to produce hard numbers, and the teams with the cleanest ROI stories are the ones who built on verified signals from the start.

This guide covers how to measure competitive intelligence ROI honestly, which metrics actually hold up to scrutiny, and why signal quality is the layer most CI ROI frameworks forget entirely.

> **Quick Answer:** Competitive intelligence ROI is measured by tracking win rate improvement in competitive deals, revenue influenced by CI-driven actions, time saved on manual tracking, and the quality of signals acted on. Teams that build their ROI case on verified evidence chains produce numbers that hold up to executive scrutiny. Teams that rely on unverifiable AI summaries often overcount influenced revenue.

---

## Why Most CI ROI Calculations Are Inflated

The standard CI ROI calculation goes like this: tally all the deals where a rep viewed a battlecard before closing, attribute a share of that revenue to competitive intelligence, and present the number to leadership. It sounds solid until someone asks a harder question.

Did the battlecard contain accurate information? Was it updated based on a verified competitor move, or was it last refreshed from a press release in Q3? If a rep won a deal citing a product gap that the competitor had already closed, the “influenced revenue” number is misleading. No one on the CI team flagged the gap closure because no one detected it.

This is the evidence problem in CI ROI. The metrics are not wrong in principle; the problem is that most CI programs treat signal accuracy as a given, when it is actually the hardest variable to control.

Klue and Crayon both publish useful CI ROI frameworks. But they assume the signals feeding the battlecards are reliable. That assumption needs to be tested before any revenue attribution claim goes to an executive.

---

## The Two Layers of CI ROI

A defensible CI ROI framework has two layers.

**Layer 1: Signal Quality**

This is the upstream layer, and it is the one most frameworks skip. Signal quality measures whether the intelligence you are acting on is verified, specific, and recent. Without it, Layer 2 numbers are fiction.

Key Layer 1 metrics:

- Detection latency: how many hours between a competitor making a page change and your team being notified
- Evidence completeness: percentage of signals that include a before/after page diff, confidence score, and recommended action
- Signal accuracy rate: percentage of alerts that turn out to be real competitive moves versus noise
- Coverage ratio: percentage of your competitor set that is being actively monitored for page-level changes
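Here is a minimal Python sketch of how these four metrics could be computed from an exported alert log. The `Signal` fields, the `layer1_metrics` function, and the use of the median for latency are all assumptions for illustration. They do not reflect any particular tool's schema.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Signal:
    # Hypothetical fields; real CI tools export different shapes.
    competitor: str
    changed_at: datetime    # best estimate of when the competitor page changed
    notified_at: datetime   # when your team received the alert
    has_diff: bool          # before/after page diff attached
    has_confidence: bool    # confidence score attached
    has_action: bool        # recommended action attached
    confirmed_real: bool    # reviewer judged it a real move, not noise


def layer1_metrics(signals, competitor_set, monitored):
    """Compute the four Layer 1 signal-quality metrics for one review period."""
    if not signals or not competitor_set:
        raise ValueError("need at least one signal and one competitor")
    latencies = [(s.notified_at - s.changed_at).total_seconds() / 3600 for s in signals]
    complete = sum(s.has_diff and s.has_confidence and s.has_action for s in signals)
    accurate = sum(s.confirmed_real for s in signals)
    return {
        # Median rather than mean, so one slow alert does not dominate.
        "detection_latency_hours": median(latencies),
        "evidence_completeness": complete / len(signals),
        "signal_accuracy_rate": accurate / len(signals),
        # Only competitors in your defined set count toward coverage.
        "coverage_ratio": len(competitor_set & monitored) / len(competitor_set),
    }
```

Coverage uses the intersection of the two sets. A monitored company that falls outside your competitor set therefore does not inflate the ratio.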

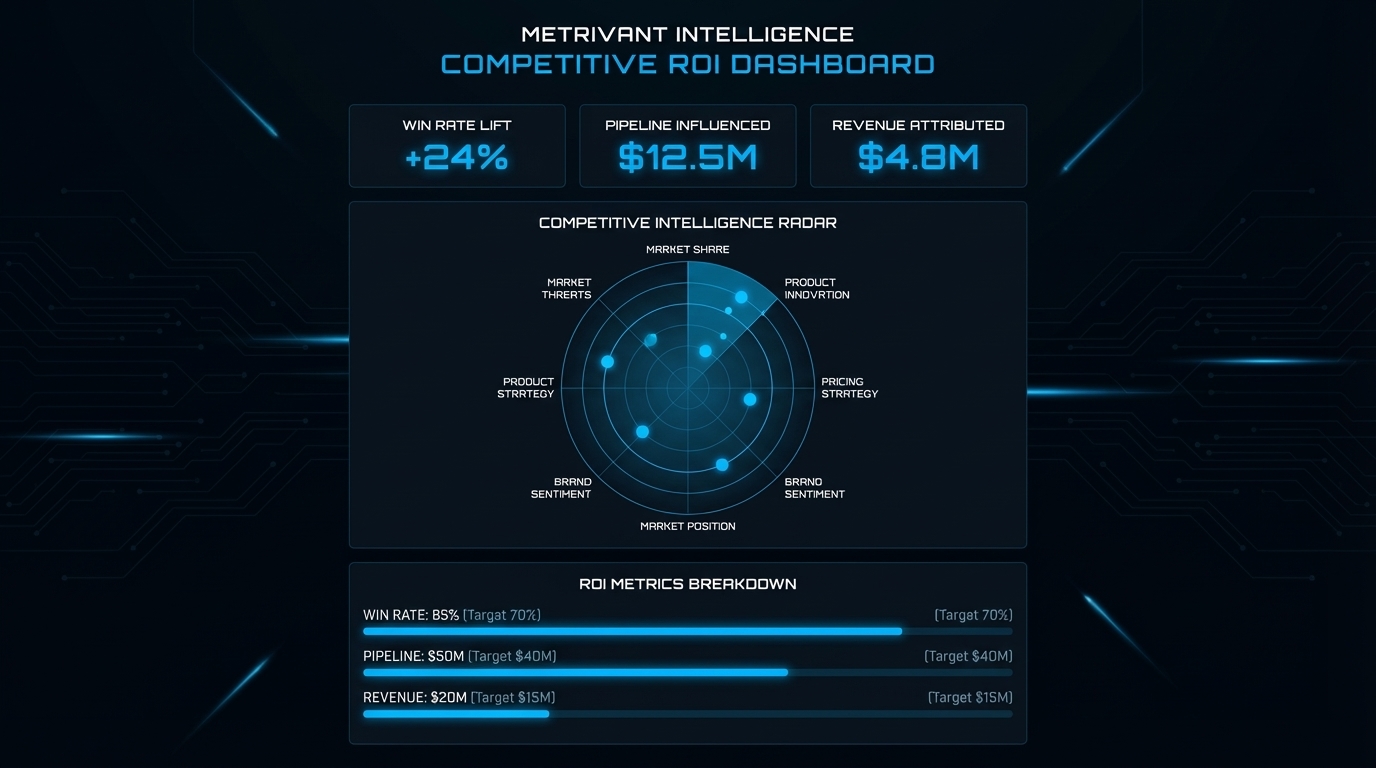

**Layer 2: Business Outcomes**

These are the metrics leadership expects to see. They become credible once Layer 1 is confirmed.

Key Layer 2 metrics:

- Win rate delta on competitive deals before and after CI program launch
- Pipeline influenced: total revenue in deals where CI deliverables were accessed before close
- Competitive deal cycle length: do reps close faster when they have current competitive data?
- Battlecard freshness rate: percentage of battlecards updated within the last 30 days based on a verified signal
- Time saved: hours per week reclaimed from manual competitor checking
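Battlecard freshness rate is the easiest of these to miscount, because a recent edit is not the same as a verified one. The sketch below assumes a hypothetical export of `(last_updated, update_was_verified)` pairs, one per battlecard. It treats the 30-day window as inclusive.

```python
from datetime import date, timedelta


def battlecard_freshness_rate(cards, today, window_days=30):
    """Share of battlecards updated within `window_days` based on a verified signal.

    `cards` is a list of (last_updated, update_was_verified) tuples, a
    hypothetical export format. Updates not traced to a verified signal
    do not count as fresh, even if they are recent.
    """
    if not cards:
        raise ValueError("no battlecards to score")
    cutoff = today - timedelta(days=window_days)
    fresh = sum(1 for updated, verified in cards if verified and updated >= cutoff)
    return fresh / len(cards)
```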

---

## Competitive Intelligence ROI: The Metrics That Hold Up

### 1. Win Rate Improvement on Competitive Deals

This is the clearest ROI signal, but it requires a baseline. Before launching a CI program, measure your win rate on deals where competitors are named. Track it at 30, 60, and 90 days post-launch.

Crayon’s 2025 State of Competitive Intelligence data shows the average company self-rates competitive selling effectiveness at 3.8 out of 10. Sellers face competitors in nearly 70% of deals. There is significant room for improvement, and a team that can show a 4-6 percentage point win rate lift on competitive deals has a compelling ROI case.

Even a 3-5 percentage point improvement in competitive win rate typically produces annual revenue impact that exceeds the cost of CI infrastructure by a factor of 5 to 20, depending on deal size.
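The rough Python sketch below shows how deal size drives that multiple. The function name, the 200-deal volume, and the $20,000 annual CI cost are hypothetical inputs chosen only to show the arithmetic, so substitute your own baseline and costs.

```python
def win_rate_roi_multiple(competitive_deals_per_year, lift_points, avg_deal_size, annual_ci_cost):
    """Incremental annual revenue from a win-rate lift, divided by annual CI cost.

    lift_points is in percentage points (4 means, say, 30% -> 34%). The formula
    assumes the lift applies evenly across competitive deals and is caused by the
    CI program, which is exactly what the signal-quality layer has to support.
    """
    extra_wins = competitive_deals_per_year * lift_points / 100
    incremental_revenue = extra_wins * avg_deal_size
    return incremental_revenue / annual_ci_cost


# Hypothetical inputs: 200 competitive deals a year, a 4-point lift, and
# $20,000 of annual CI cost (tooling plus staff time attributed to the program).
for deal_size in (10_000, 25_000):
    print(deal_size, win_rate_roi_multiple(200, 4, deal_size, 20_000))
# 10000 4.0
# 25000 10.0
```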

### 2. Time Saved on Manual Research

This is the easiest metric to measure. Survey your team before CI automation: how many hours per week does someone spend manually checking competitor websites, pricing pages, and job boards?

A conservative estimate of 4 hours per week per person across a 3-person PMM team adds up to 624 hours per year. At a fully loaded labor cost of $80/hour, that is roughly $50,000 in effort redirected to higher-value work. CI infrastructure at $9 to $19 per month ($108 to $228 per year) does not need a complicated ROI argument to win that comparison.
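The same arithmetic fits in a small Python helper. It assumes the 4 hours are per person and that the year has 52 working weeks; lower `weeks_per_year` to account for holidays. The helper name is illustrative.

```python
def manual_research_cost(hours_per_person_per_week, people, hourly_cost, weeks_per_year=52):
    """Annual hours and fully loaded cost of manual competitor checking."""
    hours = hours_per_person_per_week * people * weeks_per_year
    return hours, hours * hourly_cost


hours, cost = manual_research_cost(4, 3, 80)
tool_cost_range = (9 * 12, 19 * 12)  # $9 to $19 per month, annualized
print(hours, cost, tool_cost_range)  # 624 49920 (108, 228)
```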

### 3. Pipeline Influenced

Pipeline influenced is the revenue in deals where sales reps accessed competitive content before closing. Most CI tools track this through CRM integration. It is a reasonable proxy for CI value, with one caveat: influenced does not mean caused. Track this alongside win rates, not in isolation.

The defensibility of your influenced revenue number depends directly on whether the content reps accessed was accurate. A battlecard built on verified page diffs from a live monitoring system is a fundamentally different asset than one built on a quarterly manual audit.
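Here is a minimal sketch of the calculation from a CRM export. It reports the influenced total alongside the portion backed by verified content, so the two numbers travel together. The `Deal` fields are hypothetical, and counting same-day access as "before close" is a judgment call you may want to tighten.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Deal:
    # Hypothetical CRM export fields.
    amount: float
    closed_on: date
    ci_accessed_on: Optional[date]  # first time a rep opened CI content, if ever
    content_verified: bool          # content traced to a verified page diff


def pipeline_influenced(deals):
    """Return (total influenced, portion backed by verified content).

    A deal counts as influenced if CI content was accessed on or before
    the close date. Influenced does not mean caused.
    """
    influenced = [
        d for d in deals
        if d.ci_accessed_on is not None and d.ci_accessed_on <= d.closed_on
    ]
    total = sum(d.amount for d in influenced)
    verified = sum(d.amount for d in influenced if d.content_verified)
    return total, verified
```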

### 4. Signal-to-Action Conversion Rate

Of all the competitor signals your team receives in a month, what percentage results in an action? An action means updating a battlecard, briefing a sales rep, adjusting a positioning statement, or escalating to leadership.

A low conversion rate usually means signals are too noisy, or the signal format does not make the next step obvious. Both are fixable. Metrivant includes one recommended action per signal, built into the signal output, specifically because ambiguous alerts do not get acted on.
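Here is a minimal sketch of the conversion rate, assuming each signal is logged with the action labels taken against it. The label names are hypothetical stand-ins for the four actions described above, and a signal with several actions still counts once.

```python
# Hypothetical action labels matching the four actions named above.
ACTIONS = {"battlecard_update", "rep_briefing", "positioning_change", "leadership_escalation"}


def signal_to_action_rate(outcomes):
    """`outcomes` maps a signal id to the list of labels logged against it."""
    if not outcomes:
        raise ValueError("no signals in period")
    # A signal counts as converted if at least one qualifying action was taken.
    acted = sum(1 for labels in outcomes.values() if ACTIONS.intersection(labels))
    return acted / len(outcomes)
```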

### 5. Competitive Deal Cycle Length

Do reps close faster when they have current competitive data? Some teams compare deal cycle length on opportunities where CI deliverables were accessed versus those where they were not. A 10-15% reduction in competitive deal cycle length is a board-level argument for CI infrastructure investment.
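One way to run that comparison is sketched below in Python. It assumes you can export cycle lengths in days for both groups, and it uses medians so a few stalled deals do not swamp the result. It compares two groups of deals rather than running a controlled test, so treat the output as a correlation.

```python
from statistics import median


def cycle_length_reduction(with_ci_days, without_ci_days):
    """Relative reduction in median competitive cycle length for CI-accessed deals."""
    m_with = median(with_ci_days)
    m_without = median(without_ci_days)
    # Positive result means CI-accessed deals closed faster at the median.
    return (m_without - m_with) / m_without
```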

---

## Making the Business Case: What Executives Actually Want to See

Most executive presentations fail not because the numbers are wrong but because they are not connected to outcomes the executive cares about.

Three things that land in executive reviews:

First, a before/after win rate comparison. Simple. Specific. If win rates on competitive deals moved from 34% to 42% in a quarter where CI was active, calculate the dollar figure at your average deal size.

Second, a “cost of not knowing” scenario. Pick one competitor move your CI program detected early. Show what would have happened if the sales team found out three weeks later in a loss debrief. What deal was at risk? What positioning update did you make based on verified evidence? This narrative is more persuasive than a dashboard for most executives.

Third, time-to-update on battlecards. If a competitor changes their pricing page and your battlecard reflects the new pricing within 24 hours rather than 3 weeks, that is a measurable quality improvement. Tie it to a deal scenario where stale pricing data could have cost you.
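The first and third items reduce to simple arithmetic, shown in the hedged Python sketch below with hypothetical helper names and inputs. The dollar figure counts wins above what the baseline rate would have produced at the new deal volume. The update lag is measured from the competitor's page change to the battlecard edit.

```python
from datetime import datetime


def win_rate_dollar_impact(before_wins, before_deals, after_wins, after_deals, avg_deal_size):
    """Dollar value of wins above what the baseline win rate would have produced."""
    expected_at_baseline = after_deals * before_wins / before_deals
    return (after_wins - expected_at_baseline) * avg_deal_size


def update_lag_hours(changed_at, battlecard_updated_at):
    """Hours between a competitor page change and the matching battlecard update."""
    return (battlecard_updated_at - changed_at).total_seconds() / 3600


# Hypothetical quarter: 100 competitive deals in each period, 34% -> 42%,
# and a $30,000 average deal size.
print(win_rate_dollar_impact(34, 100, 42, 100, 30_000))  # 240000.0

# Hypothetical pricing-page change: battlecard updated same day vs three weeks later.
changed = datetime(2026, 3, 2, 9)
print(update_lag_hours(changed, datetime(2026, 3, 2, 17)))  # 8.0
print(update_lag_hours(changed, datetime(2026, 3, 23, 9)))  # 504.0
```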

---

## What Metrivant’s Evidence Chain Means for ROI Defensibility

In March 2026, Metrivant detected Mercury making a coordinated product and positioning move. The system classified it as `feature_launch` combined with `positioning_shift`, which resolved to `product_expansion` and `market_reposition` in the intelligence layer. The full evidence chain was inspectable: a specific page diff, before/after excerpts, a confidence score of 0.91, a strategic implication, and one recommended action.

A PMM using Metrivant updated the competitive battlecard that day. Without signal infrastructure, that move would have surfaced in a loss debrief weeks later, if at all.

That is the ROI argument in its simplest form. Every action your team takes based on verified evidence is defensible revenue attribution. Every action taken on an AI summary with no traceable source is a claim you cannot defend when someone asks how you know.

Metrivant monitors 795 pages across 150 competitors. Every signal traces to a before/after page diff. That is not an aesthetic choice. It is the prerequisite for CI ROI claims that hold up.
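To make "every signal traces to a before/after page diff" concrete, here is a hypothetical evidence record in Python. It carries the fields listed in the Mercury example and generates its diff with the standard library's `difflib`. The schema, the `is_defensible` check, its 0.8 confidence threshold, and the example URL and excerpts are all illustrative assumptions. None of them is Metrivant's data format or API.

```python
import difflib
from dataclasses import dataclass


@dataclass
class EvidenceRecord:
    # Hypothetical schema mirroring the fields listed in the Mercury example;
    # this is not Metrivant's actual data format or API.
    url: str
    before: str
    after: str
    signal_types: tuple
    confidence: float
    implication: str
    recommended_action: str

    def diff(self):
        """Unified diff of the before/after page excerpts."""
        return list(difflib.unified_diff(
            self.before.splitlines(), self.after.splitlines(),
            fromfile="before", tofile="after", lineterm="",
        ))

    def is_defensible(self, min_confidence=0.8):
        """True if there is a real change, enough confidence, and a next step."""
        return (
            bool(self.diff())
            and self.confidence >= min_confidence
            and bool(self.recommended_action.strip())
        )


rec = EvidenceRecord(
    url="https://competitor.example/product",
    before="Headline: Simple invoicing",
    after="Headline: Invoicing and expense management",
    signal_types=("feature_launch", "positioning_shift"),
    confidence=0.91,
    implication="Competitor is expanding into expense management",
    recommended_action="Add an expense-management row to the battlecard",
)
print("\n".join(rec.diff()))
```

A record whose before and after excerpts are identical produces an empty diff. It fails the check no matter how confident the classifier was.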

Learn more about the evidence chain approach in the evidence chain guide, and compare tools in the best competitive intelligence tools roundup.

Start building a defensible CI ROI story with a free trial at metrivant.com/trial.

---

## Frequently Asked Questions

**What is competitive intelligence ROI and how do you measure it?**

Competitive intelligence ROI is the measurable business value a CI program produces relative to its cost. The primary metrics are win rate improvement on competitive deals, pipeline influenced by CI deliverables, time saved on manual research, and competitive deal cycle length. The most defensible ROI calculations combine output metrics with upstream signal quality metrics, since acting on inaccurate intelligence inflates revenue attribution.

**What is a realistic win rate improvement from competitive intelligence?**

Teams that move from no formal CI program to a structured evidence-based approach typically see 3-8 percentage point improvements in competitive deal win rates within 90 days. The key variable is whether the CI signals are accurate and acted on quickly enough to influence deal preparation.

**How does competitive intelligence ROI differ from general marketing ROI?**

Marketing ROI measures revenue attributed to traffic, leads, and conversions. CI ROI specifically measures revenue impact from better competitive positioning, faster response to competitor moves, and more effective sales enablement on competitive deals. CI ROI is best tracked at the deal level, not the campaign level, using CRM data linked to competitive content usage.

**How does Metrivant help measure CI program ROI?**

Metrivant connects every signal to a verified page diff, which means every action your team takes based on a Metrivant signal is traceable. That traceability is what makes revenue attribution defensible. Metrivant also provides a recommended action per signal, which improves signal-to-action conversion rates. Plans start at $9/month at metrivant.com/pricing.

**What is the biggest mistake CI teams make when presenting ROI to leadership?**

The most common mistake is presenting influenced revenue without tying it to signal accuracy. Executives who push back on CI ROI usually ask: how do we know the battlecard information was correct? If you cannot answer that question, the revenue attribution falls apart. Teams using evidence-chain CI can point to the specific page diff that triggered the battlecard update, which is the difference between a CI ROI claim and a CI ROI fact.