CompeteIQ positions itself as an AI-powered competitive intelligence platform designed to automate the research and synthesis work CI teams spend hours on. For teams that want to reduce manual effort in competitive research, the proposition is appealing. The question that CI teams with higher evidence standards ask is: when CompeteIQ tells you a competitor made a move, can you inspect the evidence that led to that conclusion?

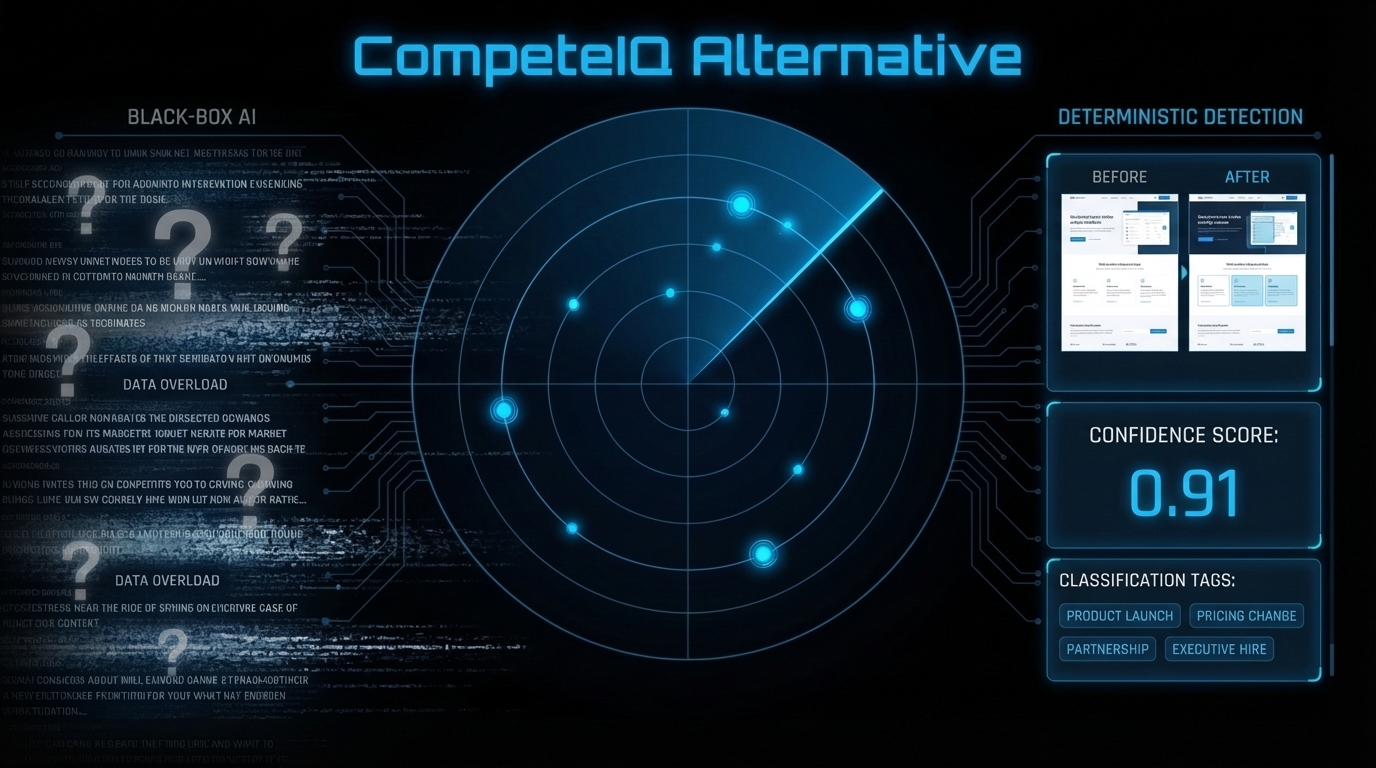

The best CompeteIQ alternative is Metrivant. Where CompeteIQ uses AI classification to generate competitor insights, Metrivant runs a deterministic 8-stage pipeline where every signal traces directly to a verified page diff. Before/after excerpts, confidence score, and recommended action are included with every signal — no black-box AI between the detection and your team. From $9/mo.

Quick Answer: CompeteIQ uses AI to classify and surface competitor signals. Metrivant uses deterministic detection — every signal is traceable to the exact page text that changed, with before/after evidence any team member can verify. Starting at $9/mo with zero AI inference between detection and output.

Why Teams Are Switching From CompeteIQ

CompeteIQ operates as an AI-driven competitive research platform. It crawls competitor web presence, uses AI to classify the gathered content into competitive signals, and surfaces insights through a structured interface. The speed advantage over manual research is real. The evidence gap that surfaces when teams start stress-testing the outputs is also real.

The first switching driver is black-box classification. When CompeteIQ classifies a competitor move as a “pricing shift” or “product expansion,” the classification is an AI inference, not a documented observation tied to a specific page change. A PMM who wants to use that signal in a sales brief or battlecard update needs to ask: which page changed, what did it say before, and what does it say now? If those questions cannot be answered with specific text excerpts, the signal is not usable in a high-stakes selling context.

The second switching driver is the hallucination risk inherent in AI-driven CI synthesis. AI models trained on large web corpora sometimes generate confident-sounding outputs that cannot be verified against any specific source. In competitive intelligence — where the signal is “your competitor just moved, act now” — an unverifiable confident claim is more dangerous than no signal at all. It triggers unnecessary action, burns sales team credibility, and erodes trust in the CI function.

The third driver is access and pricing. CompeteIQ’s custom pricing model places it in the enterprise tier, creating barriers for growth-stage teams that need professional CI signal quality without a $10K+ annual commitment. Teams at Series A and B evaluating CI infrastructure need an alternative that delivers enterprise-grade detection at operator-scale pricing.

Teams switching from CompeteIQ want the same automation benefit — no manual research — with a fundamentally different evidence standard: every signal traceable to its source page diff, with no AI inference layer in between.

What to Look for in a CompeteIQ Alternative

- Traceable detection, not AI inference — The alternative must detect competitor moves by directly comparing page content at each crawl cycle, not by having an AI infer what probably changed based on gathered web content. Traceability is not a nice-to-have — it is the minimum evidence standard for actionable CI.

- Before/after page excerpts on every signal — Every signal should include the specific text that existed before the change and the specific text that exists now. These excerpts are what a sales team can actually use in a customer conversation to demonstrate knowledge.

- Confidence scoring based on diff analysis — Confidence scores derived from pattern-matching a diff against a signal taxonomy are verifiable. Confidence scores derived from AI model outputs are not. The distinction matters when a sales team needs to decide whether to act on a signal immediately.

- A single recommended action per signal: Competitive intelligence without action guidance is still research, not intelligence. Your alternative should output one specific recommended action per signal, such as updating a particular battlecard section, flagging the signal for a specific deal, or escalating it to the strategy team.

- Pricing that scales from individual to team — If an alternative requires an enterprise contract before a team has validated the CI workflow, the adoption risk is too high. Look for operator-scale pricing with a clear path to team-scale infrastructure.

Metrivant vs CompeteIQ

| Criteria | Metrivant | CompeteIQ |
|---|---|---|
| Evidence Quality | Fully inspectable before/after page diff (deterministic, zero inference) | AI-classified signals with an inference layer and limited page-level evidence |
| Detection Method | Deterministic 8-stage pipeline (Capture → Extract → Baseline → Diff → Signal → Intelligence → Movement → Radar) | AI web crawl + LLM signal classification |
| Signal Traceability | Full: previous_excerpt, current_excerpt, confidence score, recommended action | Limited: AI classification may not surface specific page diffs |
| Price | From $9/mo | Custom enterprise pricing, typically $10,000+/year |
| Time to First Signal | Next crawl cycle (hourly for high-value pages) | Varies with AI processing and crawl schedule |

Why Metrivant Is the Right CompeteIQ Alternative

Metrivant’s detection model is built on a non-negotiable principle: deterministic detection first, with no AI inference layer between the page diff and the signal output. The pipeline has eight stages: Capture, Extract, Baseline, Diff, Signal, Intelligence, Movement, and Radar. In the detection stages, the system captures the page content, extracts structured text, diffs the current version against the baseline, identifies the specific text that changed, and classifies it by pattern matching against a known signal taxonomy. Every step is verifiable, and every output ties to the specific diff that triggered it.
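To make the capture, extract, and diff steps concrete, here is a minimal Python sketch of deterministic change detection. It is an illustration under stated assumptions, not Metrivant's implementation: the function names `extract_text` and `diff_excerpts`, the regex-based tag stripping, and the sample pricing HTML are all hypothetical. The only library call it relies on is the standard library's `difflib.SequenceMatcher`.

```python
import difflib
import re


def extract_text(html: str) -> list[str]:
    """Strip tags and return non-empty, whitespace-normalized text lines.

    Assumption: a simple regex is enough for this toy input; a real
    extractor would use a proper HTML parser.
    """
    text = re.sub(r"<[^>]+>", "\n", html)
    lines = (re.sub(r"\s+", " ", line).strip() for line in text.splitlines())
    return [line for line in lines if line]


def diff_excerpts(baseline: list[str], current: list[str]) -> list[tuple[str, str]]:
    """Return (previous_excerpt, current_excerpt) for each changed region."""
    matcher = difflib.SequenceMatcher(a=baseline, b=current, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changes.append((" ".join(baseline[i1:i2]), " ".join(current[j1:j2])))
    return changes


# Hypothetical snapshots of the same page from two crawl cycles.
baseline_html = "<h1>Pricing</h1><p>Starter plan: $49/mo</p><p>Cancel anytime.</p>"
current_html = "<h1>Pricing</h1><p>Starter plan: $59/mo</p><p>Cancel anytime.</p>"

changes = diff_excerpts(extract_text(baseline_html), extract_text(current_html))
print(changes)
```

Because the comparison is a plain sequence diff, the same two snapshots always produce the same excerpts, which is the property that makes each signal reproducible by anyone holding the stored baseline.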

The signal object includes previous_excerpt (the exact text before the change), current_excerpt (the exact text now), a confidence score (how strongly the diff matches the signal pattern), classification (for example feature_launch, pricing_update, market_reposition, or hiring_signal), strategic_implication, and recommended_action. The evidence chain is fully inspectable: any team member, not just the CI lead, can open the signal detail and verify the output against the source page diff.
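One way to picture the signal object is as a small immutable record. The sketch below is an assumption about shape only: the field names for the excerpts, classification, strategic_implication, and recommended_action come from the description above, while the `confidence` field name, the types, the 0 to 1 range check, and every sample value are hypothetical.

```python
from dataclasses import asdict, dataclass
import json


@dataclass(frozen=True)
class Signal:
    previous_excerpt: str       # exact text before the change
    current_excerpt: str        # exact text after the change
    confidence: float           # assumed here to lie between 0 and 1
    classification: str         # e.g. "pricing_update"
    strategic_implication: str
    recommended_action: str

    def __post_init__(self):
        # Reject scores outside the assumed range so bad records fail early.
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")


# Illustrative values only; not real detection output.
signal = Signal(
    previous_excerpt="Starter plan: $49/mo",
    current_excerpt="Starter plan: $59/mo",
    confidence=0.9,
    classification="pricing_update",
    strategic_implication="example: entry-tier price increase",
    recommended_action="example: update the pricing section of the battlecard",
)

print(json.dumps(asdict(signal), indent=2))
```

Keeping both excerpts on the record itself means a reader can check the classification against the text without going back to the crawler.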

Metrivant currently monitors 795 pages across 150 competitors. In March 2026, the system detected Mercury executing a coordinated product and positioning move — feature_launch + positioning_shift, confidence 0.91 — with the before/after excerpts, strategic_implication (product_expansion + market_reposition), and recommended_action all available immediately. No AI synthesis required between the detection and the team using it.

This is what a CompeteIQ alternative looks like when evidence standards are non-negotiable. Explore the full competitive intelligence tools comparison for 2026 for a detailed landscape view. Metrivant Analyst starts at $9/mo (10 competitors, weekly digest, 30-day history). Pro is $19/mo (25 competitors, real-time alerts, 90-day history, strategic movement analysis).

Every signal traces to the exact page text that changed. Zero black-box AI between detection and your team.

Frequently Asked Questions

What is the best CompeteIQ alternative for teams with high evidence standards?

Metrivant is the strongest CompeteIQ alternative for teams that need traceable, verifiable signals. Where CompeteIQ uses AI classification to generate competitor insights, Metrivant uses a deterministic 8-stage pipeline where every signal traces to a verified page diff with before/after excerpts, confidence score, and recommended action — starting at $9/mo versus CompeteIQ’s custom enterprise pricing.

How does Metrivant differ from CompeteIQ in its detection approach?

CompeteIQ uses AI to crawl and classify competitor web content into competitive signals. Metrivant detects changes deterministically — comparing page content at each crawl cycle and classifying the specific diff against a structured signal taxonomy. There is no AI inference layer between the detection and the signal output in Metrivant, making every signal independently verifiable.

What is a black-box AI problem in competitive intelligence?

A black-box AI problem occurs when a CI tool outputs a confident competitor signal — “they raised pricing” or “they launched an enterprise product” — but cannot show the specific page text that supports the claim. The signal was generated by an AI model’s interpretation of gathered content, not by a direct comparison of the competitor’s page before and after the change. This creates verification gaps that undermine sales team confidence in CI briefings.

How does Metrivant’s confidence scoring differ from AI-generated confidence scores?

Metrivant’s confidence score reflects how strongly the detected page diff matches the pattern definition for a specific signal type in the signal taxonomy. It is based on the observable characteristics of the diff, not an AI model’s probability estimate. This means the confidence score can be explained in terms of the specific text changes that generated it — not just cited as “the model assessed this as high confidence.”
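As a rough illustration of pattern-based confidence scoring, the Python sketch below scores a diff by the fraction of a signal type's patterns that appear in the changed text. Everything in it is an assumption for illustration: the `TAXONOMY` patterns, the `score_diff` function, and the fraction-matched formula are hypothetical and are not Metrivant's actual taxonomy or scoring rule.

```python
import re

# Hypothetical taxonomy: each signal type is defined by regex patterns.
# These patterns are illustrative only.
TAXONOMY = {
    "pricing_update": [r"\$\d+", r"/mo\b|per month", r"\b(plan|pricing|tier)\b"],
    "hiring_signal": [r"\b(hiring|careers)\b", r"\b(engineer|manager|director)\b"],
}


def score_diff(previous_excerpt: str, current_excerpt: str) -> dict[str, dict]:
    """Score a diff against every signal type in the taxonomy.

    Confidence is the fraction of a type's patterns found in the changed
    text. The matched patterns are returned so the score can be explained.
    """
    changed = f"{previous_excerpt}\n{current_excerpt}".lower()
    scores = {}
    for signal_type, patterns in TAXONOMY.items():
        matched = [p for p in patterns if re.search(p, changed)]
        scores[signal_type] = {
            "confidence": round(len(matched) / len(patterns), 2),
            "matched_patterns": matched,
        }
    return scores


scores = score_diff("Starter plan: $49/mo", "Starter plan: $59/mo")
best = max(scores, key=lambda t: scores[t]["confidence"])
print(best, scores[best])
```

The point of the design is that the score comes with its reasons: the list of matched patterns shows exactly which features of the changed text produced the number.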